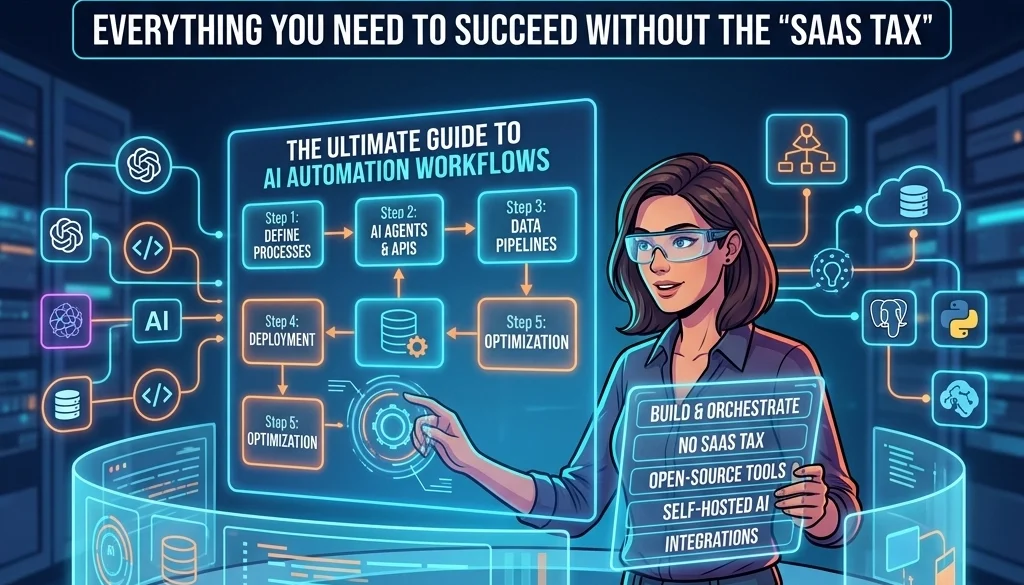

The Ultimate Guide to AI Automation Workflows: Everything You Need to Succeed Without the “SaaS Tax”

Current State of AI Automation

Business operations in 2026 are defined by the transition from static automation to dynamic, agentic workflows. Traditional automation relied on rigid "If This, Then That" logic. Modern AI automation workflows use Large Language Models (LLMs) to interpret intent, handle unstructured data, and execute complex sequences across multiple platforms.

The primary obstacle to scaling these systems is the "SaaS Tax." This term describes the cumulative cost of per-task execution fees, seat-based licensing, and data egress charges imposed by proprietary automation platforms. For organizations processing thousands of transactions daily, these costs often exceed the value provided by the automation itself.

Defining the SaaS Tax in 2026

The SaaS Tax manifests in three primary areas:

- Volume-Based Pricing: Platforms that charge per "step" or "task" make costs climb with every execution, penalizing scale.

- Model Markups: Third-party wrappers that charge a premium on top of standard LLM token costs.

- Data Silos: Proprietary ecosystems that limit the ability to migrate workflows to internal infrastructure.

To automate business operations with AI effectively, organizations need to shift toward self-hosted infrastructure and open-source deployment.

Core Framework of AI Automation Workflows

A functional AI workflow consists of five distinct stages. Maintaining modularity between these stages allows for the replacement of individual components without rebuilding the entire system.
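
As a minimal sketch of that modularity, assuming each stage is a plain Python function that takes and returns a shared context dictionary, the whole pipeline can be a simple loop. The five stage functions below are hypothetical placeholders, not any framework's API; swapping a component means replacing one entry in the list.

```python
from typing import Any, Callable

Context = dict[str, Any]
# Each stage receives the shared context and returns an updated copy.
Stage = Callable[[Context], Context]


def run_pipeline(stages: list[Stage], event: Context) -> Context:
    """Pass the context through each stage in order."""
    context = dict(event)
    for stage in stages:
        context = stage(context)
    return context


# Hypothetical placeholder stages; replacing one never touches the others.
def trigger_stage(ctx: Context) -> Context:
    return {**ctx, "source": ctx.get("source", "webhook")}


def preprocess_stage(ctx: Context) -> Context:
    # Collapse stray whitespace from the raw payload.
    return {**ctx, "text": " ".join(ctx["raw"].split())}


def llm_stage(ctx: Context) -> Context:
    # Stand-in for a model call: a keyword check instead of real classification.
    intent = "invoice" if "invoice" in ctx["text"].lower() else "other"
    return {**ctx, "intent": intent}


def action_stage(ctx: Context) -> Context:
    return {**ctx, "action": f"route_to_{ctx['intent']}"}


def verify_stage(ctx: Context) -> Context:
    return {**ctx, "verified": ctx["action"] in {"route_to_invoice", "route_to_other"}}


STAGES = [trigger_stage, preprocess_stage, llm_stage, action_stage, verify_stage]
```

For example, `run_pipeline(STAGES, {"raw": "  New  invoice attached "})` ends with `"action": "route_to_invoice"`. Replacing `llm_stage` with a real model call leaves the other four functions untouched.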

1. Trigger and Event Detection

Workflows are initiated by specific events. These include:

- Webhooks: Real-time data signals from CRMs, ERPs, or payment gateways.

- Polling: Scheduled checks of legacy databases or external APIs.

- Human-in-the-loop: Manual triggers via chat interfaces or dashboard buttons.
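
For webhook triggers, a common safeguard is confirming that a payload really came from the sender before any model sees it. The sketch below assumes the sender signs the raw request body with HMAC-SHA256 using a shared secret and sends the hex digest alongside it; header names, encodings, and prefixes (for example `sha256=`) differ between providers, so check each provider's documentation.

```python
import hashlib
import hmac


def verify_webhook(body: bytes, signature_hex: str, secret: bytes) -> bool:
    """Return True if signature_hex is the hex HMAC-SHA256 of body under secret.

    Assumption: the provider signs the raw body and sends a bare hex digest.
    """
    expected = hmac.new(secret, body, hashlib.sha256).hexdigest()
    # compare_digest avoids leaking timing information; comparing bytes also
    # avoids a TypeError if the received header contains non-ASCII characters.
    return hmac.compare_digest(expected.encode(), signature_hex.encode())
```

Requests that fail this check should be rejected before they reach the preprocessing stage.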

2. Data Preprocessing and Context Retrieval

Raw data is rarely ready for LLM consumption. Preprocessing involves:

- Cleaning: Removing noise and formatting errors.

- RAG (Retrieval-Augmented Generation): Pulling relevant documentation or historical data from a vector database to provide the AI with necessary context.

- Filtering: Identifying and removing sensitive PII (Personally Identifiable Information) before transmission.
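
The sketch below illustrates these three steps using only the standard library. The regular expressions are deliberately crude assumptions (they will miss some PII and can over-match, for example masking dates as phone numbers), and the hard-coded vectors stand in for embeddings that a real RAG setup would obtain from an embedding model and store in a vector database.

```python
import math
import re

# Crude, illustrative patterns; real PII detection needs far more coverage.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def clean(text: str) -> str:
    """Collapse runs of whitespace left over from email or OCR extraction."""
    return " ".join(text.split())


def redact_pii(text: str) -> str:
    """Mask obvious emails and phone numbers before text leaves the network."""
    text = EMAIL.sub("[EMAIL]", text)
    return PHONE.sub("[PHONE]", text)


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity; returns 0.0 for zero-length vectors."""
    norm = math.hypot(*a) * math.hypot(*b)
    return sum(x * y for x, y in zip(a, b)) / norm if norm else 0.0


def top_k(query_vec: list[float], docs: dict[str, list[float]], k: int = 1) -> list[str]:
    """Return the ids of the k documents most similar to query_vec."""
    ranked = sorted(docs, key=lambda doc_id: cosine(query_vec, docs[doc_id]), reverse=True)
    return ranked[:k]
```

For example, `redact_pii("Contact jane.doe@example.com or +1 (555) 123-4567.")` returns `"Contact [EMAIL] or [PHONE]."`, and the ids returned by `top_k` select which documents are added to the prompt as context.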

3. Logic and Intelligence (The LLM Layer)

This is the core processing stage. In 2026, the trend has shifted toward self-hosting LLMs to ensure data privacy and eliminate per-token markups. The LLM performs:

- Intent Classification: Determining the next step based on user input.

- Extraction: Turning unstructured text into structured JSON.

- Reasoning: Determining which external tools are required to fulfill the request.
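
A minimal sketch of intent classification combined with extraction, assuming the model is wrapped in a hypothetical `llm` callable that takes a prompt string and returns text (for example, a thin wrapper around a self-hosted model). The intent names are illustrative. Because model output is not guaranteed to be valid JSON, anything unparseable or outside the allowed set falls back to `"unknown"` instead of triggering an action.

```python
import json
from typing import Callable

# Hypothetical intents for a CRM-style workflow.
ALLOWED_INTENTS = {"create_lead", "update_lead", "send_contract", "unknown"}

PROMPT = (
    "Classify the request into one of: {intents}. "
    'Reply with JSON only: {{"intent": "...", "fields": {{...}}}}\n\nRequest: {text}'
)


def classify(text: str, llm: Callable[[str], str]) -> dict:
    """Ask the model for an intent plus extracted fields; fall back to 'unknown'."""
    prompt = PROMPT.format(intents=", ".join(sorted(ALLOWED_INTENTS)), text=text)
    raw = llm(prompt)
    try:
        parsed = json.loads(raw)
    except json.JSONDecodeError:
        return {"intent": "unknown", "fields": {}}
    # Never trust the model to stay inside the allowed set.
    if not isinstance(parsed, dict) or parsed.get("intent") not in ALLOWED_INTENTS:
        return {"intent": "unknown", "fields": {}}
    return {"intent": parsed["intent"], "fields": parsed.get("fields", {})}
```

Passing the model in as a function also keeps this layer testable with a stub, such as `lambda prompt: '{"intent": "update_lead", "fields": {"lead_id": 42}}'`.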

4. Action and Tool Invocation

The LLM generates a command or a function call. The system then executes this against external software via API. Examples include:

- Updating a lead status in a custom CRM.

- Generating and sending a legal contract.

- Deploying code to a staging environment.
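
One way to keep the model from calling arbitrary code is an explicit registry: the LLM proposes a call by name, and the system executes only functions registered in advance. The sketch assumes the call arrives as a dictionary with `name` and already-parsed `arguments` (some APIs deliver arguments as a JSON string that must be decoded first); `update_lead_status` is a hypothetical stand-in for a real CRM call.

```python
from typing import Any, Callable

TOOLS: dict[str, Callable[..., Any]] = {}


def tool(fn: Callable[..., Any]) -> Callable[..., Any]:
    """Register fn so the model can request it by name."""
    TOOLS[fn.__name__] = fn
    return fn


@tool
def update_lead_status(lead_id: int, status: str) -> str:
    # Hypothetical: a real version would call the CRM's API here.
    return f"lead {lead_id} -> {status}"


def invoke(call: dict[str, Any]) -> Any:
    """Execute a model-proposed call shaped like {"name": ..., "arguments": {...}}."""
    name = call.get("name")
    if name not in TOOLS:
        # Refuse anything outside the allowlist instead of guessing.
        raise ValueError(f"model requested unregistered tool: {name!r}")
    return TOOLS[name](**call.get("arguments", {}))
```

A request for an unregistered tool such as `{"name": "drop_tables"}` raises an error rather than reaching any external system.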

5. Post-processing and Verification

The final stage ensures the output meets quality standards.

- Schema Validation: Ensuring the output matches the required data format.

- Human Review: For high-stakes operations, the system flags the output for manual approval before execution.

- Logging: Recording the entire transaction for audit and training purposes.
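
A minimal sketch of this stage, assuming a hypothetical invoice schema expressed as a field-to-type mapping and a hypothetical amount threshold above which a human must approve. Each decision is written as one JSON line through a `write` callable, so the same code can append to a file or to an in-memory list. A production system would more likely use a schema library such as JSON Schema or Pydantic.

```python
import json
import time
from typing import Callable

# Hypothetical schema: required field -> expected type (strict: 120 is not a float).
SCHEMA = {"invoice_id": str, "amount": float, "vendor": str}
REVIEW_THRESHOLD = 10_000.0  # hypothetical amount above which a human must approve


def validate(output: dict) -> list[str]:
    """Return schema errors; an empty list means the output is well-formed."""
    errors = [f"missing {key}" for key in SCHEMA if key not in output]
    errors += [
        f"{key} should be {typ.__name__}"
        for key, typ in SCHEMA.items()
        if key in output and not isinstance(output[key], typ)
    ]
    return errors


def verify(output: dict, write: Callable[[str], object]) -> str:
    """Validate, decide whether a human must review, and log the decision."""
    errors = validate(output)
    if errors:
        status = "rejected"
    elif output["amount"] > REVIEW_THRESHOLD:
        status = "needs_review"
    else:
        status = "approved"
    # One JSON line per transaction keeps the audit log easy to search and replay.
    record = {"ts": time.time(), "output": output, "errors": errors, "status": status}
    write(json.dumps(record) + "\n")
    return status
```

To log to disk, pass a file's `write` method: `with open("audit.jsonl", "a", encoding="utf-8") as f: status = verify(output, f.write)`.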

Advanced Strategies for Connecting Disparate Systems

Integrating legacy software with modern AI agents is the primary technical challenge in business automation. Disparate systems often lack standardized APIs or use incompatible data formats.

Middleware as a Translation Layer

Custom middleware acts as a bridge. It converts proprietary data formats from legacy systems into standardized JSON that AI models can interpret. This approach allows companies to automate business operations with AI without replacing their foundational enterprise software.
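
As an illustration of such a translation layer, the sketch below converts one record from a fixed-width text export, a format many older systems still produce, into JSON. The column layout and field names are hypothetical; a real connector would take them from the legacy system's file specification.

```python
import json

# Hypothetical fixed-width layout from a legacy ERP export: (field, start, end).
LAYOUT = [("customer_id", 0, 6), ("name", 6, 26), ("balance_cents", 26, 36)]


def legacy_to_json(line: str) -> str:
    """Translate one fixed-width legacy record into a JSON object."""
    record = {field: line[start:end].strip() for field, start, end in LAYOUT}
    # Convert implied-decimal cents into a number the model can reason about.
    record["balance"] = int(record.pop("balance_cents")) / 100
    return json.dumps(record)
```

Keeping the layout in data rather than code means a new legacy export only needs a new `LAYOUT` table, not a new parser.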

The Role of Open-Source Orchestrators

Tools like n8n, LangChain, and CrewAI provide the framework for connecting various APIs without the recurring costs of proprietary platforms. By deploying these on private servers, businesses maintain control over their logic and data.

Eliminating the SaaS Tax Through Self-Hosting

The most effective method to avoid the SaaS Tax is the deployment of local or private cloud infrastructure. Marketrun specializes in open-source deployment for this purpose.

Benefits of Self-Hosting LLMs

- Cost Predictability: Fixed infrastructure costs instead of variable token fees.

- Privacy: Data never leaves the corporate network, ensuring compliance with global regulations.

- Customization: Models can be fine-tuned on internal datasets without exposure to third-party providers.

Strategic Infrastructure Selection

Choosing between on-premises hardware and private cloud instances depends on the required latency and data volume. For high-frequency AI automation workflows, dedicated GPU clusters provide the throughput needed to handle complex reasoning tasks in real time.
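
Throughput sizing can start as back-of-the-envelope arithmetic. The sketch below assumes you have measured how many tokens per second one GPU sustains for your chosen model (for example, with a load test) and keeps 30% headroom for spikes. All inputs are assumptions to replace with your own measurements, and the formula ignores batching effects and the difference between prompt and output tokens.

```python
import math


def gpus_needed(peak_requests_per_s: float, tokens_per_request: float,
                tokens_per_s_per_gpu: float, headroom: float = 0.7) -> int:
    """Estimate GPU count so that peak load uses at most `headroom` of capacity."""
    required_tokens_per_s = peak_requests_per_s * tokens_per_request
    usable_per_gpu = tokens_per_s_per_gpu * headroom
    return math.ceil(required_tokens_per_s / usable_per_gpu)
```

With a hypothetical peak of 20 requests per second at 500 tokens each and a measured 2,500 tokens per second per GPU, `gpus_needed(20, 500, 2500)` returns 6.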

ROI Analysis: Automation vs. SaaS Subscription

Evaluating the success of an AI automation strategy requires a focus on Return on Investment (ROI) rather than just initial deployment costs.

| Metric | Proprietary SaaS | Self-Hosted / Custom |
|---|---|---|
| Setup Cost | Low | Medium/High |
| Scaling Cost | High (grows with every task) | Low (marginal) |
| Data Ownership | Limited | Total |
| Customization | Templated | Unlimited |
| Security | Shared Responsibility | Internal Control |

Organizations often find that while the initial investment in custom software is higher, the break-even point occurs within 6 to 12 months due to the elimination of monthly "per-task" fees.
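
The break-even point is simple arithmetic: divide the up-front build cost by the monthly saving. The sketch assumes the SaaS option has negligible setup cost and that both monthly costs stay flat; the figures in the example are hypothetical.

```python
import math


def break_even_months(setup_cost: float, saas_monthly: float, selfhost_monthly: float) -> float:
    """Months until the self-hosted build has paid for itself versus SaaS fees."""
    monthly_saving = saas_monthly - selfhost_monthly
    if monthly_saving <= 0:
        return math.inf  # self-hosting never pays back at these costs
    return setup_cost / monthly_saving
```

For example, 10,000 invoices a month at $0.50 each is $5,000 a month in SaaS fees. Against a hypothetical $2,000 monthly self-hosting cost and a $30,000 build, `break_even_months(30_000, 5_000, 2_000)` returns `10.0`.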

Real-World Application: Automating Business Operations with AI

Example: Automated Procurement and Invoicing

- Trigger: An email arrives with an attached invoice.

- Preprocessing: OCR (Optical Character Recognition) extracts text; RAG checks the vendor against the approved database.

- Intelligence: The LLM compares invoice line items against the original Purchase Order.

- Action: If data matches, the system triggers a payment via the banking API. If it deviates, it flags the invoice for human review in Slack.

- Log: The transaction is recorded in the internal ERP.

This workflow, when built on custom infrastructure, operates at the cost of electricity and server maintenance, rather than a $0.50 per-invoice fee charged by specialized SaaS vendors.
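
The comparison step in this example can also be made deterministic once OCR and the LLM have extracted the line items into structured form. The sketch below swaps in a plain rule-based check instead of asking the LLM to compare: a line is flagged if its SKU is missing from the purchase order, its quantity exceeds the ordered quantity, or its unit price differs by more than a hypothetical 1% tolerance. The `Line` type and its field names are assumptions.

```python
from dataclasses import dataclass


@dataclass
class Line:
    sku: str
    qty: int
    unit_price: float


def match_invoice(invoice: list[Line], po: list[Line], price_tolerance: float = 0.01) -> list[str]:
    """Return discrepancies between invoice and PO lines; an empty list means pay."""
    po_by_sku = {p.sku: p for p in po}
    issues = []
    for line in invoice:
        ordered = po_by_sku.get(line.sku)
        if ordered is None:
            issues.append(f"{line.sku}: not on purchase order")
        elif line.qty > ordered.qty:
            # Billing for less than ordered (a partial shipment) is allowed.
            issues.append(f"{line.sku}: billed {line.qty}, ordered {ordered.qty}")
        elif abs(line.unit_price - ordered.unit_price) > price_tolerance * ordered.unit_price:
            issues.append(f"{line.sku}: price {line.unit_price} vs PO {ordered.unit_price}")
    return issues


def route(invoice: list[Line], po: list[Line]) -> str:
    """Pay clean invoices; send anything with discrepancies to a human."""
    return "pay" if not match_invoice(invoice, po) else "human_review"
```

In the workflow above, `"pay"` would lead to the banking API call and `"human_review"` to the Slack flag, with the list of issues included so the reviewer sees why.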

Implementing the Solution with Marketrun

Marketrun provides the technical expertise to design, build, and deploy these systems. The focus is on creating sustainable, high-performance AI automations that scale with the business.

Our Approach:

- Audit: Mapping existing manual processes and identifying high-impact automation targets.

- Development: Building custom connectors for disparate systems and legacy software.

- Deployment: Setting up self-hosted LLMs and open-source orchestrators.

- Optimization: Continuous monitoring and refinement of workflow logic to ensure peak performance.

For companies operating across borders, we offer specialized consulting for US clients and India-based operations, ensuring localized data compliance and cost-effective development.

Future-Proofing AI Workflows

The landscape of AI is volatile. To ensure longevity, workflows must be model-agnostic. By using standardized orchestration layers, a business can swap a Llama-series model for a GPT-series model (or vice versa) without rewriting the integration logic.
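
A minimal sketch of a model-agnostic layer, assuming every backend exposes an OpenAI-compatible `/chat/completions` endpoint (OpenAI's API does, and self-hosted servers such as vLLM and Ollama offer compatible endpoints). Integration code depends only on `complete()`, so switching models means changing the base URL and model name rather than the workflow logic.

```python
import json
import urllib.request
from dataclasses import dataclass


@dataclass
class ChatBackend:
    """Any server exposing an OpenAI-compatible /chat/completions endpoint."""
    base_url: str
    model: str
    api_key: str = ""

    def build_request(self, prompt: str) -> urllib.request.Request:
        body = {"model": self.model, "messages": [{"role": "user", "content": prompt}]}
        headers = {"Content-Type": "application/json"}
        if self.api_key:
            headers["Authorization"] = f"Bearer {self.api_key}"
        return urllib.request.Request(
            f"{self.base_url.rstrip('/')}/chat/completions",
            data=json.dumps(body).encode("utf-8"),
            headers=headers,
            method="POST",
        )

    def complete(self, prompt: str) -> str:
        """Send the prompt and return the first choice's message text."""
        with urllib.request.urlopen(self.build_request(prompt), timeout=60) as resp:
            payload = json.load(resp)
        return payload["choices"][0]["message"]["content"]
```

Swapping models is then a configuration change, for example `ChatBackend("http://localhost:8000/v1", "my-llama-model")` for a local server versus `ChatBackend("https://api.openai.com/v1", "my-gpt-model", api_key="...")` for a hosted one; the model names here are placeholders.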

Successful AI automation workflows are those that prioritize flexibility and ownership. Avoiding the "SaaS Tax" is not merely a cost-saving measure; it is a strategic requirement for maintaining operational independence in an AI-driven economy.

For detailed pricing on custom implementation and infrastructure setup, visit our pricing page or explore our blog for more technical insights into self-hosting and custom development.