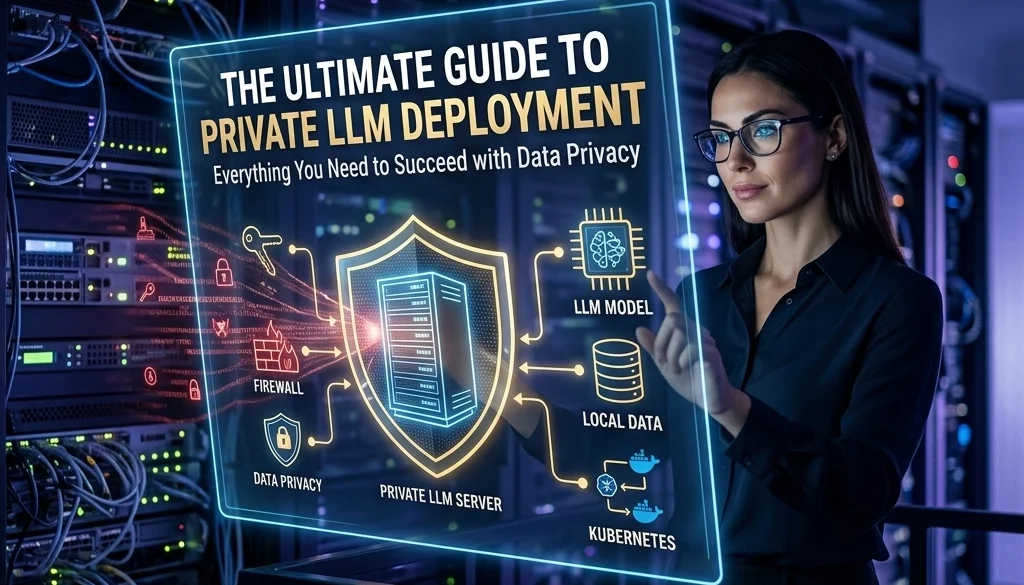

The Ultimate Guide to Private LLM Deployment: Everything You Need to Succeed with Data Privacy

Private LLM Deployment: Core Concepts and Rationale

Private Large Language Model (LLM) deployment refers to the hosting of generative AI models within a restricted environment. This environment is controlled by the organization, either on-premises or within a virtual private cloud. This approach differs from public API usage where data is transmitted to third-party providers such as OpenAI or Google.

The primary objective of private LLM deployment is the preservation of data sovereignty. In a public API model, input data is processed on external servers, introducing risks regarding data retention, model training usage, and unauthorized access. Private deployment ensures that sensitive information remains within the organizational perimeter.

Comparative Analysis: Public vs. Private Deployment

| Feature | Public API (OpenAI/Claude) | Private LLM Deployment |
|---|---|---|
| Data Privacy | Data sent to third party | Data remains on-site/private cloud |
| Customization | Limited to fine-tuning APIs | Full model and architecture control |
| Compliance | Dependency on provider terms | GDPR/HIPAA/SOC 2 controls managed in-house |
| Latency | Dependent on internet and provider load | Dependent on local hardware capacity |
| Cost Structure | Pay-per-token (Variable) | Capital/Operational expenditure (Largely fixed) |

Data Security and Compliance Frameworks

Organizations operating in regulated industries must adhere to specific data handling standards. Private LLM deployment is a primary solution for meeting these legal obligations.

GDPR Compliance

The General Data Protection Regulation (GDPR) requires strict control over the processing of personal data of individuals in the EU. Private deployment supports "data protection by design and by default" (often called "Privacy by Design"): data is not transferred across international borders unless the internal infrastructure team explicitly configures it to be.

HIPAA Compliance

For healthcare entities in the United States, the Health Insurance Portability and Accountability Act (HIPAA) requires the protection of Protected Health Information (PHI). Public AI services used on standard terms often come without a Business Associate Agreement (BAA) or the necessary technical safeguards. Local deployments give the organization direct control over the audit trails and encryption that HIPAA's technical safeguards call for.

Infrastructure and Hardware Requirements

The execution of LLMs requires specific computational resources. The scale of the deployment is determined by the parameter count of the chosen model (e.g., 7B, 13B, 70B, or 400B+).

GPU Specifications

NVIDIA hardware is the current industry standard due to the CUDA ecosystem.

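- Small-Scale Models (7B – 13B): A 7B model in FP16 (about 14GB of weights) fits on 1x NVIDIA A10 or RTX 4090 (24GB VRAM). A 13B model in FP16 (about 26GB of weights) needs a 48GB card such as the L40, or quantization to fit in 24GB.

- Medium-Scale Models (30B – 70B): Require 2x to 4x NVIDIA A100 (80GB) or H100 (80GB). A 70B model in FP16 has about 140GB of weights before any KV cache is allocated.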

- Enterprise-Scale Models: Require clusters of H100 or H200 GPUs connected via InfiniBand for high-speed data transfer.
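The GPU tiers above follow from simple arithmetic on parameter count and numeric precision. The sketch below is a rough estimate only: the 20% allowance for KV cache and activations is an assumption chosen for illustration, and real usage depends on context length, batch size, and the inference engine.

```python
# Bytes needed to store one parameter at common precisions.
BYTES_PER_PARAM = {"fp32": 4.0, "fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(params_billions: float, precision: str = "fp16") -> float:
    """Approximate memory for the model weights alone, in GB (10^9 bytes)."""
    return params_billions * BYTES_PER_PARAM[precision]


def estimated_vram_gb(params_billions: float, precision: str = "fp16",
                      overhead_fraction: float = 0.2) -> float:
    """Weights plus an assumed fractional allowance for KV cache and activations."""
    return weight_memory_gb(params_billions, precision) * (1.0 + overhead_fraction)


if __name__ == "__main__":
    for size in (7, 13, 70):
        for precision in ("fp16", "int8", "int4"):
            estimate = estimated_vram_gb(size, precision)
            print(f"{size}B {precision}: ~{estimate:.0f} GB")
```

Comparing the estimate with the VRAM of a candidate card gives a first indication of whether a model fits on one GPU or must be split across several.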

Memory and Storage

- System RAM: As a rule of thumb, provision about 2x the size of the model weights so that loading and host-side buffers do not exhaust memory. For a 70B model, 256GB+ RAM is recommended.

- Storage: High-speed NVMe SSDs (minimum 2TB) are necessary to minimize model loading times and facilitate rapid vector database queries.

Security Architecture and Access Control

A secure deployment architecture isolates the model from the public internet and restricts internal access.

Containerization

Docker and Kubernetes are the usual choice for reproducible and isolated environments. Containers package the model server, its dependencies, and its configuration, which prevents conflicts with the host system and limits the impact of a compromised service.

Role-Based Access Control (RBAC)

Access to the LLM and its underlying data must be governed by RBAC. Only authorized service accounts or personnel should interact with the inference endpoints. Integration with existing identity providers (LDAP, Active Directory, or OIDC) is a standard requirement.
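A minimal sketch of the RBAC check an inference endpoint might perform is shown below. The role names, permission strings, and the `Principal` type are hypothetical; in a real deployment the roles would come from the identity provider (for example, group claims in an OIDC token) rather than from a hard-coded table.

```python
from dataclasses import dataclass

# Hypothetical role-to-permission mapping; a real deployment would load this
# from the identity provider or a policy store.
ROLE_PERMISSIONS = {
    "admin": {"inference:invoke", "model:deploy", "logs:read"},
    "app-service": {"inference:invoke"},
    "auditor": {"logs:read"},
}


@dataclass(frozen=True)
class Principal:
    """An authenticated user or service account and the roles assigned to it."""
    name: str
    roles: frozenset


def is_allowed(principal: Principal, permission: str) -> bool:
    """Return True if any of the principal's roles grants the permission."""
    return any(
        permission in ROLE_PERMISSIONS.get(role, set())
        for role in principal.roles
    )
```

Unknown roles grant nothing, so the check denies by default.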

Encryption

Data must be encrypted in three states:

- At Rest: Using AES-256 for model weights and stored logs.

- In Transit: Using TLS 1.3 for all API calls between the application and the inference server.

- In Use: Utilizing Trusted Execution Environments (TEEs) where hardware permits.
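For the in-transit case, a client application can refuse to negotiate anything older than TLS 1.3. The sketch below uses Python's standard `ssl` module. The function name and the optional `ca_file` parameter (a path to an internal CA bundle) are illustrative assumptions, and encryption at rest and in use are not covered here.

```python
import ssl


def make_tls13_client_context(ca_file=None):
    """Client-side TLS context that refuses anything older than TLS 1.3.

    ca_file is an optional path to a CA bundle (for example, an internal CA);
    when omitted, the system's default trust store is used.
    """
    context = ssl.create_default_context(cafile=ca_file)
    context.minimum_version = ssl.TLSVersion.TLSv1_3
    return context
```

The returned context can be passed to `urllib.request.urlopen(..., context=context)` or to any HTTP client that accepts an `ssl.SSLContext`.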

Software Deployment Architecture

The software stack for private LLM deployment includes the inference engine, the API gateway, and the orchestration layer.

Inference Engines

The inference engine is responsible for executing the model weights to generate text.

- vLLM: Optimized for high-throughput serving; it uses PagedAttention to manage KV-cache memory efficiently. Suitable for production environments serving multiple users.

- Ollama: Utilized for rapid local prototyping and smaller deployments.

- NVIDIA NIM: A set of prebuilt inference microservices from NVIDIA for deploying generative AI models in enterprise environments.
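Applications usually talk to the inference engine over HTTP. The sketch below assumes the engine exposes an OpenAI-compatible chat endpoint at `/v1/chat/completions`, as vLLM's built-in server does. The host name `llm.internal` and the model name are placeholders, not values from this guide.

```python
import json
import urllib.request


def build_chat_request(base_url: str, model: str, prompt: str,
                       max_tokens: int = 256) -> urllib.request.Request:
    """Build a POST request for an OpenAI-compatible chat completions endpoint."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
    }
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/v1/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send(request: urllib.request.Request) -> dict:
    """Send the request and decode the JSON response body."""
    with urllib.request.urlopen(request, timeout=60) as response:
        return json.loads(response.read().decode("utf-8"))


if __name__ == "__main__":
    # Placeholder host and model name; replace with the internal endpoint.
    req = build_chat_request("http://llm.internal:8000", "example-model", "Hello")
    print(req.full_url)
```

Because the request targets an internal host, the prompt never leaves the private network.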

API Gateway

A reverse proxy (such as NGINX or Traefik) is positioned in front of the inference engine. This layer handles:

- SSL/TLS termination.

- Rate limiting to prevent hardware saturation.

- Request logging and monitoring.
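Rate limiting is normally configured in the proxy itself, since both NGINX and Traefik ship built-in rate limiters. To illustrate the underlying idea, the sketch below implements a token bucket, one common rate-limiting algorithm. The class name and parameters are illustrative, and the injectable `clock` exists only to make the behavior easy to test.

```python
import time


class TokenBucket:
    """Allow bursts up to `capacity`, refilled at `rate_per_second` tokens/s."""

    def __init__(self, rate_per_second: float, capacity: float,
                 clock=time.monotonic):
        self.rate = rate_per_second
        self.capacity = capacity
        self.clock = clock
        self.tokens = capacity          # start with a full bucket
        self.updated_at = clock()

    def allow(self, cost: float = 1.0) -> bool:
        """Consume `cost` tokens if available; return whether the request passes."""
        now = self.clock()
        elapsed = now - self.updated_at
        # Refill for the time that has passed, never exceeding capacity.
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        self.updated_at = now
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False
```

A gateway would typically keep one bucket per client or API key and answer rejected requests with HTTP 429.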

Organizations seeking to implement these stacks often utilize custom software development services to ensure seamless integration with legacy systems.

Data Management and Retrieval-Augmented Generation (RAG)

To provide context-aware responses without constant fine-tuning, RAG architecture is implemented. This involves a vector database that stores company-specific knowledge.

Vector Databases

Embeddings are generated from internal documents and stored in specialized databases:

- Milvus: Scalable, cloud-native vector database.

- ChromaDB: Lightweight and suitable for smaller, focused datasets.

- pgvector: An extension for PostgreSQL, allowing vector searches within a relational database framework.

The RAG Workflow

- Ingestion: Documents are cleaned and converted into chunks.

- Embedding: Chunks are processed through an embedding model.

- Storage: Vectors are stored in the vector database.

- Retrieval: User queries trigger a similarity search in the database.

- Generation: The LLM receives the query plus the retrieved context and produces a response grounded in that context.
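The five steps above can be sketched end to end in plain Python. This is a toy illustration under stated assumptions: `embed` is a bag-of-words stand-in for a real embedding model, `InMemoryVectorStore` stands in for a vector database such as Milvus or pgvector, and the final call to the LLM is left out, so the sketch stops at building the prompt.

```python
import math
import re
from collections import Counter


def chunk(text: str, max_words: int = 40) -> list:
    """Ingestion: split a document into chunks of at most max_words words."""
    words = text.split()
    return [" ".join(words[i:i + max_words]) for i in range(0, len(words), max_words)]


def embed(text: str) -> Counter:
    """Embedding: toy stand-in for an embedding model (bag-of-words counts)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(a[term] * b[term] for term in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class InMemoryVectorStore:
    """Storage and retrieval: a list of (chunk, vector) pairs, searched linearly."""

    def __init__(self):
        self.items = []

    def add(self, text: str) -> None:
        self.items.append((text, embed(text)))

    def search(self, query: str, k: int = 2) -> list:
        query_vector = embed(query)
        ranked = sorted(self.items,
                        key=lambda item: cosine(query_vector, item[1]),
                        reverse=True)
        return [text for text, _ in ranked[:k]]


def build_prompt(query: str, context_chunks: list) -> str:
    """Generation input: the user query combined with the retrieved context."""
    context = "\n".join(f"- {c}" for c in context_chunks)
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"
```

In a production system the prompt returned by `build_prompt` would be sent to the privately hosted model, and the linear scan in `search` would be replaced by the vector database's index.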

Implementation Workflow

The transition to private LLM deployment follows a structured sequence:

- Requirement Definition: Identify the specific business problem. Define accuracy, latency, and budget metrics.

- Model Selection: Choose among openly available models such as Llama 3, Mistral, or Falcon based on task complexity and license terms.

- Infrastructure Provisioning: Acquire hardware or configure private cloud instances.

- Environment Setup: Deploy containerized inference engines and vector databases.

- Integration: Connect the LLM API to internal applications.

- Validation: Evaluate the deployment against public benchmarks and internal quality standards, and A/B test it against the existing solution where one exists.

For detailed technical guidance, refer to the self-hosting LLMs 2026 guide.

Custom AI Solutions for SMBs

Small and Medium Businesses (SMBs) often face resource constraints that complicate private deployment. However, the long-term cost of public API tokens and the risk of data leaks can make private LLM deployment a viable investment.

Cost Efficiency

While the initial setup cost for local hardware or reserved cloud instances is higher, the marginal cost per token is low, limited mainly to power and maintenance rather than per-token fees. For high-volume applications, ROI may be realized within 12 to 18 months, depending on utilization.
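The break-even point can be estimated by dividing the upfront cost by the monthly saving over the public API. The sketch below is a simplified model, and every figure in the usage example is hypothetical, chosen only to make the arithmetic easy to follow.

```python
def break_even_months(upfront_cost: float, monthly_api_cost: float,
                      monthly_operating_cost: float):
    """Months until cumulative savings cover the upfront cost.

    Returns None when private hosting costs at least as much per month as the
    public API, in which case the investment never breaks even.
    """
    monthly_saving = monthly_api_cost - monthly_operating_cost
    if monthly_saving <= 0:
        return None
    return upfront_cost / monthly_saving


if __name__ == "__main__":
    # Hypothetical figures: 60,000 upfront, 6,000/month in API fees avoided,
    # 2,000/month for power, hosting, and maintenance.
    print(break_even_months(60_000, 6_000, 2_000))
```

With these hypothetical inputs the saving is 4,000 per month, so the hardware pays for itself after 15 months. At low request volumes the saving can be negative, and the function returns `None`.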

Strategic Advantage

SMBs that adopt custom AI solutions can develop proprietary intelligence that remains an internal asset. This avoids the "vendor lock-in" associated with proprietary third-party platforms.

Monitoring and Continuous Optimization

Post-deployment, the system requires continuous monitoring to maintain performance levels.

Performance Metrics

- Time to First Token (TTFT): The duration before the user sees the start of a response.

- Tokens Per Second (TPS): The overall speed of text generation.

- GPU Utilization: Compute utilization and memory usage, monitored alongside temperature and power consumption.
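TTFT and TPS can be derived from timestamps recorded while streaming a response. The sketch below assumes the client records the send time and the arrival time of each token, in seconds. It measures end-to-end throughput, so TTFT is included in the denominator; some tools report decode-only throughput instead.

```python
def latency_metrics(request_sent_at: float, token_times: list) -> dict:
    """Compute TTFT and end-to-end tokens per second from arrival timestamps."""
    if not token_times:
        raise ValueError("no tokens were generated")
    ttft = token_times[0] - request_sent_at
    total = token_times[-1] - request_sent_at
    tokens_per_second = len(token_times) / total if total > 0 else float("inf")
    return {"ttft_s": ttft, "tokens_per_second": tokens_per_second}
```

For example, four tokens arriving at 0.5, 1.0, 1.5, and 2.0 seconds after the request give a TTFT of 0.5 seconds and 2 tokens per second.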

Model Quantization

To reduce hardware requirements, weights can be stored at lower precision (FP16 or INT8) or quantized with methods such as AWQ. These techniques shrink the model's memory footprint, usually with only a small loss in accuracy, allowing larger models to run on smaller GPUs.
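The basic idea can be shown with symmetric "absmax" INT8 quantization, one of the simplest schemes. This sketch is for illustration only: it works on a plain Python list with a single scale factor, whereas production methods such as AWQ use per-channel or per-group scales and calibration data.

```python
def quantize_int8(weights: list) -> tuple:
    """Map floats to integers in [-127, 127] using one shared scale factor."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized: list, scale: float) -> list:
    """Recover approximate float weights from the integers and the scale."""
    return [q * scale for q in quantized]


if __name__ == "__main__":
    original = [0.5, -1.0, 0.25, 0.0]
    q, scale = quantize_int8(original)
    restored = dequantize(q, scale)
    worst = max(abs(a - b) for a, b in zip(original, restored))
    print(q, f"max error = {worst:.5f}")
```

Each weight then occupies one byte instead of two (FP16) or four (FP32), at the cost of a rounding error of at most half the scale factor per weight.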

Scheduled Maintenance

Updates to the base models and vector databases must be handled through a CI/CD pipeline. This ensures that the system remains secure against new vulnerabilities and benefits from advancements in model efficiency.

Organizations interested in exploring these technologies can view Marketrun's open-source deployment solutions for more information on implementation strategies.

Conclusion on System Integrity

The deployment of private LLMs is often a practical requirement for organizations prioritizing data privacy and regulatory compliance. By transitioning away from public APIs, businesses gain direct control over their AI infrastructure, data security, and long-term operational costs. Success in this domain is achieved through rigorous infrastructure planning, robust security architecture, and the implementation of modern inference and RAG frameworks.

For further information on pricing and project initiation, visit the Marketrun pricing page.