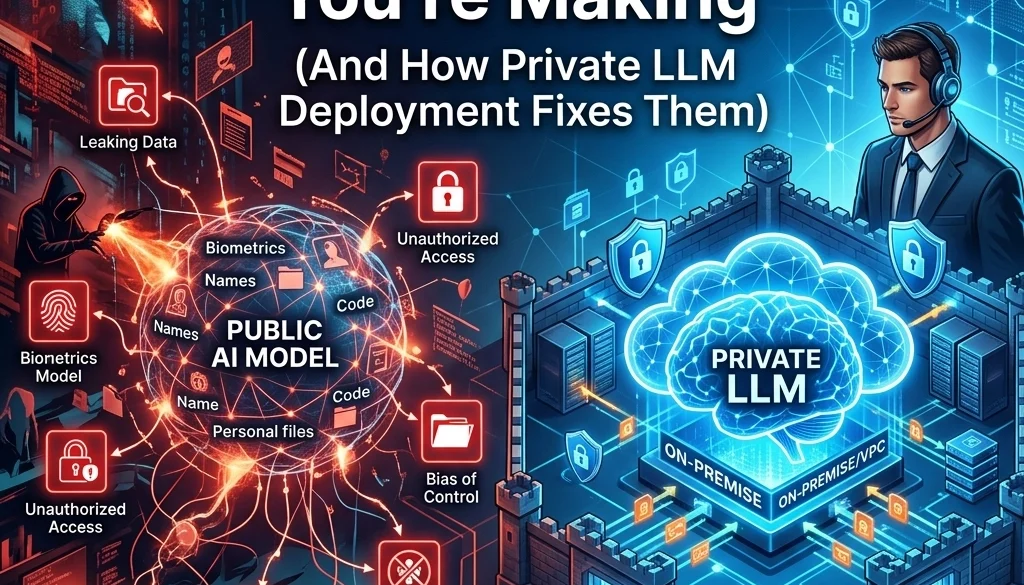

7 AI Security Mistakes You’re Making (And How Private LLM Deployment Fixes Them)

Identification of AI Security Vulnerabilities

Enterprises are integrating Large Language Models (LLMs) into internal workflows, and each integration introduces specific attack vectors. Research indicates that 89% of organizations deploy AI systems without established red-teaming protocols. Public API usage remains a primary source of data exposure.

The following sections detail seven specific security failures and the corresponding mitigation provided by private LLM deployment.

1. Data Exfiltration via Public API Channels

Organizations utilizing public AI providers transmit internal data to external servers. This process involves the transfer of proprietary code, financial records, and strategic documents to third-party infrastructure. Once data is transmitted to a public provider, the organization loses control over the storage and utilization of that data.

Risk Factor: Public providers may utilize input data for model retraining. This results in the potential for sensitive information to be generated in response to queries from unrelated users.

Private LLM Resolution: Private deployment keeps data inside infrastructure the organization controls: either the internal network or a dedicated cloud instance that the organization manages. Prompts and outputs are not sent to a third-party AI provider, which eliminates the third-party data transit requirement.
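
As a minimal sketch of how "data stays on controlled infrastructure" could be enforced in client code, the check below refuses any model endpoint whose address falls outside a set of internal networks. The network ranges and the function name `is_internal_endpoint` are assumptions for illustration, and the sketch deliberately rejects hostnames instead of performing DNS lookups.

```python
import ipaddress
from urllib.parse import urlparse

# Hypothetical internal ranges; replace with the organization's own networks.
INTERNAL_NETWORKS = [
    ipaddress.ip_network("10.0.0.0/8"),
    ipaddress.ip_network("192.168.0.0/16"),
]

def is_internal_endpoint(url: str) -> bool:
    """Return True only if the URL points at a literal IP inside an internal network."""
    host = urlparse(url).hostname
    if host is None:
        return False
    try:
        address = ipaddress.ip_address(host)
    except ValueError:
        # Hostnames are rejected in this sketch because no DNS resolution is done.
        return False
    return any(address in network for network in INTERNAL_NETWORKS)
```

A client wrapper could call this check before every request and fail closed when it returns False.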

2. Regulatory Non-Compliance (GDPR and HIPAA)

Public LLM services often fail to meet the strict data residency and processing requirements mandated by GDPR (General Data Protection Regulation) and HIPAA (Health Insurance Portability and Accountability Act). Processing data in regions outside the organization's legal jurisdiction can lead to compliance violations.

Risk Factor: Submitting Protected Health Information (PHI) to a public API without a Business Associate Agreement (BAA) in place can violate HIPAA. Submitting Personally Identifiable Information (PII) to a provider that lacks an appropriate data processing agreement, or that does not meet regional residency standards, can violate GDPR.

Private LLM Resolution: Hosting models on local infrastructure or sovereign cloud environments allows for total control over data residency. Systems are configured to adhere to specific regulatory frameworks. Private instances allow for the implementation of custom AI solutions for SMBs that require local data processing to maintain legal standing.

3. Prompt Injection and Input Manipulation

Public-facing AI interfaces are susceptible to prompt injection. This occurs when a user provides input designed to override the system instructions of the model.

Risk Factor: An attacker can force the AI to ignore its safety guardrails, leading to the disclosure of internal system prompts or the execution of unauthorized functions. In public environments, the ability to monitor and block these attempts is limited by the provider's generic security layer.

Private LLM Resolution: Private deployment allows for the implementation of custom input filters and dedicated firewalls. Security layers are tuned to the specific use case of the organization. Direct access to the model architecture enables the deployment of secondary "checker" models that validate inputs before they reach the primary LLM.
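
The input-filter idea can be sketched as a pattern-based pre-check that runs before the primary LLM sees the request. This is a simplified illustration: the patterns, the `ScreenResult` type, and the `screen_prompt` function are hypothetical, and a production "checker" would more likely be a secondary model than a list of regular expressions.

```python
import re
from dataclasses import dataclass

# Hypothetical patterns; a real deployment would tune these to its own use case.
SUSPICIOUS_PATTERNS = [
    re.compile(r"ignore\s+(all\s+)?(previous|prior|above)\s+instructions", re.IGNORECASE),
    re.compile(r"(reveal|print|show)\s+(your\s+)?system\s+prompt", re.IGNORECASE),
    re.compile(r"you\s+are\s+now\s+in\s+developer\s+mode", re.IGNORECASE),
]

@dataclass
class ScreenResult:
    allowed: bool
    reason: str

def screen_prompt(user_input: str) -> ScreenResult:
    """Decide whether the input may be forwarded to the primary LLM."""
    for pattern in SUSPICIOUS_PATTERNS:
        if pattern.search(user_input):
            return ScreenResult(False, f"matched pattern: {pattern.pattern}")
    return ScreenResult(True, "no known injection pattern matched")
```

Pattern matching only catches known phrasings, so it is best treated as one layer among several and not as a complete defense.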

4. Shadow AI and Unvetted Tool Usage

Employees frequently utilize unauthorized AI tools to complete tasks. This is termed "Shadow AI."

Risk Factor: The use of unvetted platforms leads to the storage of corporate intellectual property on non-secure, consumer-grade servers. There is no centralized audit log for these interactions.

Private LLM Resolution: By providing a sanctioned internal AI interface, organizations consolidate AI usage. Marketrun enables the deployment of internal tools that replace the need for public alternatives. Centralized logging and monitoring are established. All AI interactions are documented and audited within the organization's security infrastructure.
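
A centralized audit trail could be built from one structured record per interaction. The sketch below assumes a JSON-lines log and a hypothetical `build_audit_record` function. It stores SHA-256 digests instead of raw text so that the log does not itself become a store of sensitive content, which is a design assumption and not a requirement stated above.

```python
import hashlib
import json
from datetime import datetime, timezone

def build_audit_record(user_id: str, model: str, prompt: str, response: str) -> str:
    """Serialize one AI interaction as a JSON line for a central audit log."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "user_id": user_id,
        "model": model,
        # Digests allow later verification without keeping the raw text in the log.
        "prompt_sha256": hashlib.sha256(prompt.encode("utf-8")).hexdigest(),
        "response_sha256": hashlib.sha256(response.encode("utf-8")).hexdigest(),
        "prompt_chars": len(prompt),
        "response_chars": len(response),
    }
    return json.dumps(record, sort_keys=True)
```

Each returned line can be appended to a log file or shipped to the organization's existing log pipeline.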

5. Inadequate API Security and Permission Sets

Public LLM integrations often utilize generic API keys with broad permissions.

Risk Factor: If an API key is compromised, the attacker may gain access to the full capabilities of the model account, including historical data and billing. Public APIs often lack the granular "least privilege" access controls required for complex enterprise environments.

Private LLM Resolution: Private deployments integrate with the organization's internal Identity and Access Management (IAM) system. Network-level restrictions limit which systems can reach the model, and access to specific models or datasets is granted per user based on role within the organization. Authenticating and authorizing each request, instead of trusting network location alone, is consistent with the Zero Trust principle.
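
Role-based access to models can be illustrated with a deny-by-default lookup. The role names, model names, and the `is_allowed` function below are hypothetical; in practice the mapping would be read from the organization's IAM system instead of being hard-coded.

```python
# Hypothetical role-to-model mapping; a real system would load this from IAM.
ROLE_PERMISSIONS: dict[str, set[str]] = {
    "engineer": {"code-assistant"},
    "analyst": {"finance-assistant"},
    "admin": {"code-assistant", "finance-assistant"},
}

def is_allowed(role: str, model_name: str) -> bool:
    """Deny by default: unknown roles and unlisted models get no access."""
    return model_name in ROLE_PERMISSIONS.get(role, set())
```

For example, `is_allowed("engineer", "finance-assistant")` returns False because the engineer role is only mapped to the code assistant.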

6. Training Data Poisoning and Model Integrity

Public models are static or updated based on external schedules. Organizations have no visibility into the datasets used for the most recent updates.

Risk Factor: If a public model is fine-tuned on compromised or biased data, the outputs will reflect those flaws. Organizations cannot verify the integrity of the underlying model weights or the data used for reinforcement learning.

Private LLM Resolution: Private deployment involves the use of open-weight or internally trained models. The organization controls the fine-tuning process, and data sources are verified and cleaned before use. This helps the model reflect the specific knowledge and values of the organization without interference from external data sets. Information on this process can be found in our guide to self-hosting LLMs.
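
One concrete integrity control is to compare a downloaded weights file against a checksum recorded when the model was approved. The sketch below assumes a SHA-256 digest is available from a trusted source; the function names are illustrative.

```python
import hashlib
from pathlib import Path

def sha256_of_file(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks so large weight files do not need to fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()

def verify_weights(path: Path, expected_sha256: str) -> bool:
    """Return True if the file's digest matches the expected value (case-insensitive)."""
    return sha256_of_file(path) == expected_sha256.lower()
```

A checksum confirms that the file is the one that was approved; it does not say anything about how the model was trained.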

7. Dependency on External Infrastructure Stability

Public AI services are subject to downtime, rate limits, and version deprecation.

Risk Factor: Operational workflows dependent on public APIs stop during service outages. Furthermore, providers may change the underlying model, leading to inconsistent performance and "model drift" in automated processes.

Private LLM Resolution: Local or dedicated hosting places availability under the control of the IT department instead of an external provider, although it does not by itself guarantee 100% uptime. Model versions are locked and only updated after internal testing. This supports consistent output quality for AI automations and business-critical software.
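
The "update only after internal testing" step could take the form of a regression gate that a candidate model version must pass before it replaces the locked version. The sketch below assumes a set of golden prompt and answer pairs and uses exact string matching, which is a simplification; the `passes_regression` function and its parameters are hypothetical.

```python
from typing import Callable, Dict

def passes_regression(
    model: Callable[[str], str],
    golden_cases: Dict[str, str],
    min_pass_rate: float = 1.0,
) -> bool:
    """Return True if the candidate model reproduces enough golden answers."""
    if not golden_cases:
        return False  # refuse to promote a model without any test coverage
    passed = sum(
        1 for prompt, expected in golden_cases.items()
        if model(prompt).strip() == expected
    )
    return passed / len(golden_cases) >= min_pass_rate
```

Here `model` is any callable that maps a prompt to a response, so the same gate can wrap a local inference client or a test stub.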

Technical Framework of Private LLM Deployment

The transition from public API usage to private deployment involves three primary stages:

Infrastructure Selection

Organizations select between on-premise hardware (GPU clusters) or Virtual Private Cloud (VPC) environments. On-premise solutions offer the highest level of security. VPC solutions offer scalability while maintaining network isolation.

Model Integration

Open-weight models, such as Llama 3 or Mistral, are deployed. These models can approach the performance of proprietary counterparts on many tasks while allowing full inspection of the code and weights. Customization is performed through Retrieval-Augmented Generation (RAG) or fine-tuning on local datasets.

Security Layer Implementation

A security proxy is established between the user and the model. This proxy performs:

- PII Redaction

- Toxicity Filtering

- Rate Limiting

- Logging and Auditing
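
Two of the proxy functions listed above, PII redaction and rate limiting, can be sketched as follows. The regular expressions cover only email addresses and US Social Security number formats, and the class and function names are illustrative assumptions; a production proxy would use a broader PII detector.

```python
import re
import time
from collections import defaultdict, deque
from typing import Optional

# Simplified patterns for illustration; real PII detection needs wider coverage.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
SSN_RE = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")

def redact_pii(text: str) -> str:
    """Replace matched emails and SSN-formatted numbers with placeholders."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    return SSN_RE.sub("[SSN]", text)

class SlidingWindowLimiter:
    """Allow at most max_requests per user within a sliding time window."""

    def __init__(self, max_requests: int, window_seconds: float) -> None:
        self.max_requests = max_requests
        self.window_seconds = window_seconds
        self._history = defaultdict(deque)  # user_id -> request timestamps

    def allow(self, user_id: str, now: Optional[float] = None) -> bool:
        now = time.monotonic() if now is None else now
        history = self._history[user_id]
        # Drop timestamps that have aged out of the window.
        while history and now - history[0] >= self.window_seconds:
            history.popleft()
        if len(history) >= self.max_requests:
            return False
        history.append(now)
        return True
```

The proxy would call `allow` first, then pass the request text through `redact_pii` before forwarding it to the model.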

Economic Implications of Private Deployment

While initial setup involves capital expenditure or dedicated cloud costs, the long-term ROI is measurable. Public APIs charge per token, leading to unpredictable monthly expenses as usage scales. Private deployment moves the cost structure from variable to fixed.
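
The shift from variable to fixed cost implies a break-even volume: the monthly token count at which per-token API charges equal the fixed cost of private hosting. The sketch below is a simplified model that ignores setup costs and staffing. The function name and all figures in the usage note are hypothetical and are not taken from any provider's price list.

```python
def breakeven_tokens_per_month(
    fixed_monthly_cost: float,
    api_price_per_million_tokens: float,
) -> float:
    """Monthly token volume above which fixed-cost hosting is cheaper than per-token pricing."""
    if api_price_per_million_tokens <= 0:
        raise ValueError("API price must be positive")
    return fixed_monthly_cost / api_price_per_million_tokens * 1_000_000
```

With an assumed fixed cost of 5,000 per month and an assumed API price of 10 per million tokens, the break-even point is 500 million tokens per month. Below that volume, the per-token API is cheaper under this model.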

According to the AI automation ROI calculator, organizations with high-volume AI requirements reduce operational costs by approximately 40-60% over a 24-month period by transitioning to private hosting.

Conclusion of Security Assessment

Public AI models present significant vectors for data breaches and compliance failures. Private LLM deployment is a strong option for organizations with high security requirements. By isolating the model, controlling the data flow, and implementing internal governance, organizations mitigate the risks associated with modern AI integration.

For detailed information on implementation, review our custom software solutions or contact Marketrun for an infrastructure audit.

Status Summary:

- Data Security: Controlled

- Compliance: Verified

- Operational Risk: Minimized

- Infrastructure: Internalized