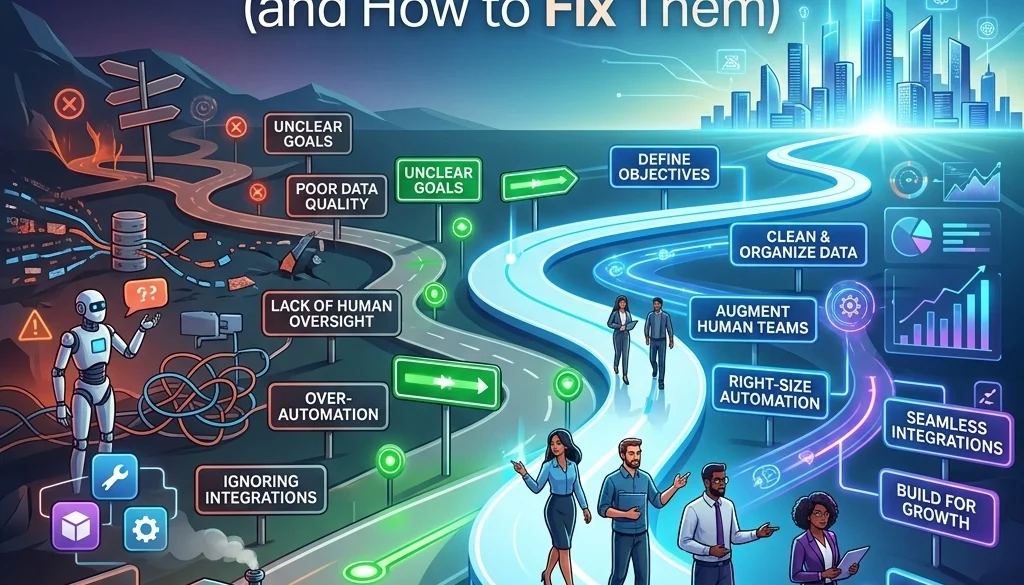

7 Mistakes You’re Making with AI Automation Workflows (and How to Fix Them)

1. Implementation of Automation on Faulty Manual Processes

Status: Critical Failure Point.

The application of AI automation workflows to inefficient manual systems results in the accelerated execution of errors. Automation serves as a force multiplier; when applied to a broken logic sequence, the volume of defective output increases proportionally.

Observed Indicators:

- Inconsistent data entry points.

- Undefined decision criteria for task escalation.

- Redundant approval layers that lack functional purpose.

Corrective Actions:

- Document the current manual process using standard flowchart protocols.

- Identify stages where human intervention is required due to lack of clarity.

- Remove redundant steps.

- Verify that the process logic is deterministic before introducing AI agents for business tasks.

- Execute a manual dry run of the optimized path.
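
As a rough illustration of what "deterministic" means here, the sketch below writes one escalation rule as an explicit function and replays recorded manual decisions against it. The `Ticket` fields, the 1000 threshold, and the "enterprise" tier are hypothetical placeholders, not values taken from any real process.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Ticket:
    # Hypothetical fields; substitute whatever the manual process records.
    amount: float
    customer_tier: str
    days_open: int


def should_escalate(ticket: Ticket) -> bool:
    """Explicit escalation rule: the same input always yields the same decision."""
    if ticket.amount >= 1000:  # assumed threshold, for illustration only
        return True
    if ticket.customer_tier == "enterprise" and ticket.days_open > 2:
        return True
    return False


def dry_run(cases: list[tuple[Ticket, bool]]) -> list[Ticket]:
    """Return the tickets where the rule disagrees with the recorded manual decision."""
    return [ticket for ticket, expected in cases if should_escalate(ticket) != expected]
```

An empty result from `dry_run` suggests the written rule matches what operators actually do; any returned ticket points to a criterion that is still undefined.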

Standardizing the workflow ensures that tools like n8n operate on a stable foundation. Processes must be functional before they are automated. Detailed guidance on structuring these systems is available at Marketrun AI Automations.

2. Deployment of Full Autonomy Without Incremental Transition

Status: Risk Assessment Warning.

The immediate transition from manual operation to fully autonomous AI agents often leads to unmonitored system drift. Small and medium-sized businesses (SMBs) frequently attempt to remove human oversight before the AI model has demonstrated consistent reliability in specific operational contexts.

Technical Risks:

- Generation of hallucinated data in customer-facing outputs.

- Execution of unauthorized API calls.

- Erosion of brand consistency.

Correction Protocol:

- Phase 1 (Drafting Mode): The AI generates the output. A human operator reviews and publishes.

- Phase 2 (Supervised Autonomy): The AI operates independently but flags low-confidence outputs for manual review.

- Phase 3 (Full Autonomy): The AI operates without intervention, supported by automated logging.
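
The three phases can be sketched as a single routing function. This is a minimal sketch under stated assumptions: the `Phase` enum, the `route_output` name, the return labels, and the logger name are all hypothetical, and the 0.85 default is simply an example threshold.

```python
import logging
from enum import Enum

logger = logging.getLogger("autonomy")  # hypothetical logger name


class Phase(Enum):
    DRAFTING = 1    # Phase 1: a human reviews every output
    SUPERVISED = 2  # Phase 2: only low-confidence outputs are reviewed
    FULL = 3        # Phase 3: no review, decisions are logged


def route_output(phase: Phase, confidence: float, threshold: float = 0.85) -> str:
    """Return 'human_review' or 'auto_publish' for one AI output."""
    if phase is Phase.DRAFTING:
        decision = "human_review"
    elif phase is Phase.SUPERVISED and confidence < threshold:
        decision = "human_review"
    else:
        decision = "auto_publish"
    # Log every decision so a performance baseline can be built later.
    logger.info("phase=%s confidence=%.2f decision=%s", phase.name, confidence, decision)
    return decision
```

Moving from one phase to the next is then a configuration change rather than a rebuild of the workflow.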

Maintain a supervised state for 30-60 days to establish a performance baseline. Data on scaling these transitions can be found in the AI Agents and Automations Guide 2026.

3. Neglecting Data Hygiene and Quality Standards

Status: Primary Root Cause of Failure.

Data quality is the fundamental determinant of success for AI automation workflows. Inaccurate or unstructured data leads to incorrect logic execution and unreliable outputs from Large Language Models (LLMs).

Data Failure Indicators:

- An estimated 85% of AI project failures are attributed to poor data quality.

- Inconsistent categorization prevents accurate routing.

- Outdated knowledge base articles produce obsolete responses.

Rectification Requirements:

- Perform a comprehensive data audit.

- Standardize input formats across all CRM and ERP systems.

- Implement data validation nodes within n8n workflows to catch anomalies before they reach the LLM.

- Regularly update internal documentation used for Retrieval-Augmented Generation (RAG).
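
A validation step of this kind might look like the following sketch, written as plain Python rather than as any specific n8n node. The field names (`email`, `category`, `message`) and the allowed category set are assumptions chosen for illustration.

```python
import re

# Deliberately simple pattern: one "@", no whitespace, a dot in the domain.
EMAIL_RE = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")
ALLOWED_CATEGORIES = {"billing", "support", "sales"}  # hypothetical categories


def validate_record(record: dict) -> list[str]:
    """Return a list of problems; an empty list means the record may proceed."""
    problems = []
    email = str(record.get("email", "")).strip().lower()
    if not EMAIL_RE.match(email):
        problems.append("invalid email")
    category = str(record.get("category", "")).strip().lower()
    if category not in ALLOWED_CATEGORIES:
        problems.append(f"unknown category: {category!r}")
    if not str(record.get("message", "")).strip():
        problems.append("empty message")
    return problems
```

Records that return a non-empty list can be routed to a correction queue instead of being passed to the LLM.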

Efficient data management allows for significant time savings, often reaching 10-20 hours per week for SMB operations. Evaluate potential returns using the AI Automation ROI Calculator.

4. Underestimation of Integration and API Architecture

Status: Systemic Bottleneck.

AI agents for business require access to disparate data silos to function effectively. Failure to correctly map API integrations results in incomplete context and system timeouts.

Common Integration Errors:

- Ignoring API rate limits of third-party platforms.

- Incompatible JSON schemas between triggers and actions.

- Lack of error-handling paths for failed connections.

Technical Fixes:

- Utilize middleware such as n8n to centralize integration logic.

- Map all required data fields and ensure type consistency (e.g., string vs. integer).

- Use a "Wait" node or the per-node "Retry On Fail" setting to manage API rate limiting.

- Securely store credentials using environment variables or encrypted vaults.
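
Where rate limiting has to be handled in custom code rather than in the workflow tool, retry with exponential backoff is one common approach. The sketch below assumes a hypothetical `RateLimitError` that the caller's API client raises on an HTTP 429 response; the attempt count and delays are illustrative defaults.

```python
import time


class RateLimitError(Exception):
    """Hypothetical exception raised by the caller's API client on HTTP 429."""


def call_with_retry(fn, max_attempts=4, base_delay=1.0, sleep=time.sleep):
    """Call fn(); on RateLimitError wait base_delay * 2**attempt, then try again."""
    for attempt in range(max_attempts):
        try:
            return fn()
        except RateLimitError:
            if attempt == max_attempts - 1:
                raise  # out of attempts: surface the error to the caller
            sleep(base_delay * 2 ** attempt)
```

The `sleep` parameter is injectable so the backoff schedule can be checked without real waiting.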

For complex architectural needs, refer to Marketrun Custom Software.

5. Absence of Continuous Performance Monitoring

Status: Operational Oversight.

The "set and forget" mentality leads to gradual performance degradation. AI models and external APIs change over time, requiring consistent oversight to ensure the workflow remains within specified parameters.

Consequences of Zero Monitoring:

- Unnoticed failures in scheduled triggers.

- Increased latency in response times.

- Cost overruns due to inefficient prompts (excess token usage) or recursive loops.

Optimization Steps:

- Establish a centralized logging system.

- Set up automated alerts for workflow errors via Slack or email.

- Conduct weekly reviews of workflow execution logs to identify recurring errors.

- Analyze token usage to optimize cost-efficiency.
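
A weekly review can start from a simple aggregation of execution records. The record shape used below (`workflow`, `status`, `tokens`) is an assumption for illustration and does not correspond to any particular tool's log format.

```python
from collections import Counter


def summarize_runs(runs: list[dict]) -> dict:
    """Aggregate execution records into error counts and token totals per workflow."""
    errors = Counter()
    tokens = Counter()
    for run in runs:
        name = run["workflow"]
        tokens[name] += run.get("tokens", 0)
        if run.get("status") != "success":
            errors[name] += 1
    return {"errors": dict(errors), "tokens": dict(tokens)}


def workflows_to_alert(summary: dict, max_errors: int = 0) -> list[str]:
    """Names of workflows whose error count exceeds the allowed maximum."""
    return sorted(name for name, count in summary["errors"].items() if count > max_errors)
```

The output of `workflows_to_alert` could feed a Slack or email notification, and the token totals give a starting point for cost analysis.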

Monitoring keeps the 10-20 hours saved weekly from being offset by accumulating technical debt. Detailed strategies are available at Marketrun Solutions.

6. Construction of Monolithic "Monster Workflows"

Status: Maintenance Risk.

Designing a single, expansive workflow to handle multiple complex tasks increases the difficulty of debugging and maintenance. A failure in one minor branch can terminate the entire process.

Characteristics of Monolithic Workflows:

- Excessive nodes within a single canvas.

- Multiple triggers feeding into a single logic stream.

- Difficulty in isolating the cause of a specific failure.

Structural Correction:

- Implement a modular architecture.

- Use "Execute Workflow" nodes to trigger smaller, specialized sub-workflows.

- Isolate complex logic into discrete services.

- Maintain a master workflow that acts as a router.
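
The router pattern can be illustrated outside any workflow tool as a dispatch table. In this sketch the handler names, event types, and payload shape are hypothetical; the point is that the router contains failures instead of letting one branch terminate everything.

```python
def handle_invoice(payload: dict) -> str:
    return f"invoice {payload['id']} processed"


def handle_lead(payload: dict) -> str:
    return f"lead {payload['id']} scored"


# Each entry stands in for one small, specialized sub-workflow.
HANDLERS = {"invoice": handle_invoice, "lead": handle_lead}


def route(event: dict) -> dict:
    """Dispatch an event to its handler and isolate any failure to that handler."""
    handler = HANDLERS.get(event.get("type"))
    if handler is None:
        return {"ok": False, "error": f"no handler for {event.get('type')!r}"}
    try:
        return {"ok": True, "result": handler(event["payload"])}
    except Exception as exc:  # a failing handler must not stop the router
        return {"ok": False, "error": f"{type(exc).__name__}: {exc}"}
```

Adding a new task type then means registering one more handler, and a failure report names the handler that raised it.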

This modular approach facilitates easier updates and scalability. Specialized deployment information is listed under Open Source Deployment.

7. Omission of Human-in-the-Loop (HITL) Checkpoints

Status: Quality Control Deficit.

Autonomous systems operating without human checkpoints carry high operational risk, particularly in sensitive areas such as finance, legal, or direct customer communication.

Impact of Omission:

- Public dissemination of incorrect information.

- Unintended financial transactions.

- Legal liability from automated decision-making.

Design Fixes:

- Insert manual approval nodes for high-stakes actions.

- Configure the system to require human verification when AI confidence scores fall below a defined threshold (e.g., 0.85).

- Build escalation paths where the AI can hand over a task to a human agent when complexity exceeds the model's capabilities.
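
These checkpoints can be sketched as a gate placed in front of every action. The action names in `HIGH_STAKES_ACTIONS` are hypothetical, and the approval queue is a plain list standing in for whatever review tool is actually used.

```python
HIGH_STAKES_ACTIONS = {"issue_refund", "send_contract", "publish_post"}  # hypothetical
CONFIDENCE_THRESHOLD = 0.85  # example threshold


def requires_human(action: str, confidence: float) -> bool:
    """High-stakes actions always need approval; others only when confidence is low."""
    return action in HIGH_STAKES_ACTIONS or confidence < CONFIDENCE_THRESHOLD


def dispatch(action: str, confidence: float, execute, approval_queue: list) -> str:
    """Run the action, or park it in the approval queue for a human to decide."""
    if requires_human(action, confidence):
        approval_queue.append({"action": action, "confidence": confidence})
        return "pending_approval"
    execute(action)
    return "executed"
```

Keeping the rule in one function makes it straightforward to audit which actions can ever run without human sign-off.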

Summary of Technical Requirements for SMB AI Implementation

To achieve a reduction of 10-20 hours of manual labor per week, the following technical standards must be adhered to:

| Component | Standard |
|---|---|
| Logic | Deterministic and verified before automation. |
| Autonomy | Phased implementation with initial supervision. |
| Data | Audited, cleaned, and standardized. |
| Integrations | Documented API schemas and rate-limit handling. |
| Monitoring | Automated logging and error alerting. |
| Architecture | Modular sub-workflows rather than monolithic structures. |
| Governance | Human-in-the-loop checkpoints for critical outputs. |

Marketrun provides the necessary infrastructure for these implementations through AI Development and AI Website Creation.

For organizations requiring localized expertise, resources are partitioned for US Clients and India Clients.

For further technical exploration regarding self-hosted solutions, view the Self-Hosting LLMs 2026 Guide.

Conclusion of Technical Assessment

The successful deployment of AI automation workflows requires a shift from experimental usage to rigorous systems engineering. By addressing these seven common errors, SMBs can stabilize their operations and realize the full efficiency potential of AI agents for business.

For pricing and consultation, visit Marketrun Pricing. Further documentation and case studies are available in the Marketrun Blog.